Prompting Is Programming

15 March 2026

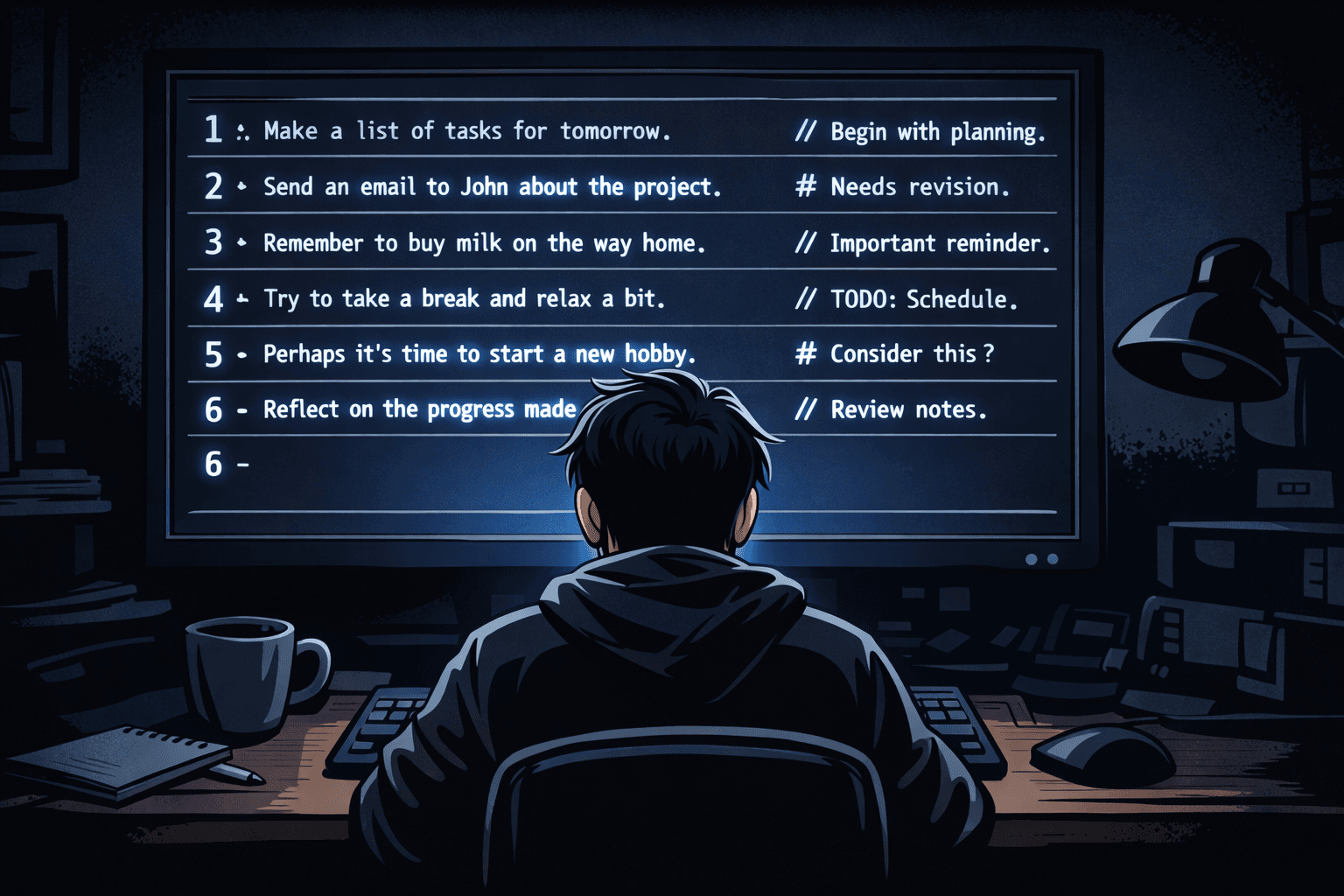

Most developers approach prompting the way non-developers approach spreadsheet formulas: type something in, see what comes out, tweak it until it mostly works, stop thinking about it. The result is prompts that are fragile, hard to reason about, and impossible to improve systematically.

That's the wrong mental model. Prompting is programming. Not a metaphor — the same underlying discipline applies. The feedback loops are shorter, the syntax is natural language, but the engineering principles are identical.

The debugging mindset

When code produces the wrong output, you don't just rephrase it and hope. You form a hypothesis about why it's wrong, test the hypothesis, and revise your understanding of what's actually happening. Prompting works the same way.

The common failure mode is random iteration: change a word, add a sentence, restructure the paragraphs, run it again. This produces results eventually, but it teaches you nothing and leaves you with a prompt you can't reason about. If the model starts giving you a different answer tomorrow — because the context changed, or you're in a different conversation, or you added something upstream — you won't know what to fix.

The better approach: treat unexpected output as a bug. Ask what the model "knows" at the point it generates that output. What have you told it? What have you not told it? What might it have inferred incorrectly from what you've given it? Usually the bug is one of three things: missing context, ambiguous instruction, or a contradiction between what you said earlier and what you're asking for now.
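One way to make the hypothesis-and-test loop concrete is to write each suspected cause down next to the single change that would test it. The sketch below is illustrative only: `Experiment`, `single_change_variants`, and the example prompt text are hypothetical names and data, not part of any library. Each variant differs from the base prompt by exactly one addition, so a change in output can be attributed to that addition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Experiment:
    hypothesis: str  # the suspected cause of the bad output
    change: str      # the single addition that tests the hypothesis
    prompt: str      # the base prompt with only that addition applied


def single_change_variants(base_prompt: str, candidate_fixes: dict[str, str]) -> list[Experiment]:
    """Build one variant per hypothesis, each differing from the base by one added line."""
    return [
        Experiment(hypothesis=hypothesis, change=fix, prompt=f"{base_prompt}\n{fix}")
        for hypothesis, fix in candidate_fixes.items()
    ]


# Hypothetical example: the summary came back too long and too generic.
base = "Summarize the attached incident report."
variants = single_change_variants(
    base,
    {
        "missing context": "The readers are on-call engineers who were not in the incident channel.",
        "ambiguous instruction": "Limit the summary to five bullet points, ordered by time.",
    },
)

for v in variants:
    print(f"[{v.hypothesis}]\n{v.prompt}\n")
```

Running each variant separately, rather than applying both fixes at once, is what tells you which hypothesis was right. A contradiction is usually tested the other way around, by removing the conflicting line rather than adding one.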

Context is scope

In a program, a variable has a scope: the region of code where it's defined and accessible. In a prompt, context works the same way. Everything the model has access to at the moment of generation is its scope. What you said three exchanges ago is still in scope, as long as it is still part of the conversation being sent to the model. What's in the system prompt is in scope. What you assumed was obvious but didn't write down is not in scope.

Most prompt failures are scope failures. The model gave you something generic because your context was generic. It didn't do what you wanted because you described the outcome, not the constraints. It contradicted something you said earlier because you introduced a conflict and didn't resolve it explicitly.

Getting good at prompting is largely getting good at managing context — knowing what the model needs to know, when it needs to know it, and what to remove when the context gets too noisy.
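The scope analogy can be made literal by assembling, as one string, everything the model would see for a given turn. This is a minimal sketch under stated assumptions: `in_scope` and `mentions` are hypothetical helpers, the role labels are illustrative, and the substring check is a deliberately crude stand-in for reading the context yourself.

```python
def in_scope(system_prompt: str, history: list[tuple[str, str]], user_message: str) -> str:
    """Return everything the model can see at generation time as a single string."""
    lines = [f"system: {system_prompt}"]
    lines += [f"{role}: {text}" for role, text in history]
    lines.append(f"user: {user_message}")
    return "\n".join(lines)


def mentions(context: str, fact: str) -> bool:
    """Crude check: was this fact ever written down anywhere in the context?"""
    return fact.lower() in context.lower()


# Hypothetical conversation about a database migration.
context = in_scope(
    system_prompt="You are reviewing SQL migrations for a PostgreSQL 15 database.",
    history=[
        ("user", "We cannot take downtime."),
        ("assistant", "Understood, I will avoid table locks."),
    ],
    user_message="Is this migration safe?",
)

print(mentions(context, "PostgreSQL 15"))    # stated in the system prompt
print(mentions(context, "50 million rows"))  # assumed by the author, never written down
```

The second fact is the kind of thing that feels obvious to the person asking and is invisible to the model: if the table size matters to the answer, it has to appear somewhere in that string.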

Specificity is everything

Good function names are specific. getUsersByCreatedDateDescending is better than getUsers because it encodes intent and constraints (which field orders the results, and in which direction) that the return type alone doesn't reveal. A vague name leaves every caller guessing at the behavior.

Prompts work identically. "Write me a blog post about X" produces a blog post about X the way the model expects blog posts to look — generic, safe, structured like every other blog post it's been trained on. "Write a blog post about X for an audience of senior engineers who are skeptical of the premise, in the second person, leading with a counterintuitive claim" produces something you can actually use or edit into shape.

The specificity isn't about being controlling — it's about communicating intent. The same intent you encode in a type signature or a function name needs to go somewhere in a prompt. If you leave it out, the model fills the gap with its default, which is usually not what you want.
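The comparison to a type signature can be taken at face value. In the sketch below, the intent that the vague prompt leaves out becomes required fields, so it cannot be omitted by accident. `BlogPostSpec` and its field names are hypothetical, chosen to mirror the example prompt above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BlogPostSpec:
    topic: str
    audience: str  # who is reading, and what they already believe
    voice: str     # grammatical person or tone
    opening: str   # how the post should start

    def render(self) -> str:
        """Turn the spec into a single prompt sentence."""
        return (
            f"Write a blog post about {self.topic} "
            f"for an audience of {self.audience}, "
            f"in {self.voice}, "
            f"leading with {self.opening}."
        )


spec = BlogPostSpec(
    topic="X",
    audience="senior engineers who are skeptical of the premise",
    voice="the second person",
    opening="a counterintuitive claim",
)
prompt = spec.render()
print(prompt)
```

Because none of the fields has a default, constructing a `BlogPostSpec` without an audience raises a `TypeError`. The gap is caught before the model gets a chance to fill it with its own default.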

Abstraction and reuse

If you find yourself writing the same paragraph of context at the start of every conversation, that's a candidate for abstraction. System prompts exist for this reason — they let you define standing context once and reuse it across interactions. The same principle applies at a smaller scale: if a particular framing reliably improves outputs, write it down and use it deliberately rather than reconstructing it from scratch each time.

This is also where the engineering discipline separates from the vibe-based approach. A developer who builds up a library of useful patterns — specific framings that work, context-setting techniques that reduce ambiguity, output formats that are easy to parse and edit — gets compounding returns. Someone who prompts by feel restarts from zero every time.
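A pattern library does not need to be elaborate. The following is a minimal sketch using `string.Template` from the Python standard library; the pattern names, their wording, and the `use` helper are illustrative assumptions, not recommended phrasings.

```python
from string import Template

# A small, named collection of framings that have worked before (hypothetical examples).
PATTERNS = {
    "skeptical_review": Template(
        "Review the following $artifact as a skeptical $role. "
        "List concrete problems before any praise."
    ),
    "json_output": Template(
        "Respond with a JSON object containing exactly these keys: $keys."
    ),
}


def use(name: str, **fields: str) -> str:
    """Fill in a named pattern; raises KeyError if the name or a field is missing."""
    return PATTERNS[name].substitute(**fields)


print(use("skeptical_review", artifact="design doc", role="staff engineer"))
print(use("json_output", keys="title, summary"))
```

`Template.substitute` raises `KeyError` when a placeholder is left unfilled, so a half-completed pattern fails loudly instead of sending a literal `$role` to the model.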

Iteration has a direction

The last thing that distinguishes programming from guessing is that iteration has a direction. When you debug code, you're moving toward a specific, verifiable outcome. You know when you've succeeded because the tests pass, the error disappears, the behavior matches the spec.

Prompts need the same thing: a definition of done. What does a good output actually look like? If you can't describe it before you run the prompt, you'll accept whatever comes back and call it close enough. If you can describe it precisely, you can iterate with purpose — each run tells you something specific about what the model understood and what it didn't.
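A definition of done can be written down before the first run, in the same spirit as a test suite. The sketch below is a rough illustration: the checks are hypothetical and deliberately simple, and `failed_checks` is an assumed helper name. Real criteria will often need human judgment, but even crude checks force you to say what "good" means in advance.

```python
from typing import Callable

Check = Callable[[str], bool]

# Hypothetical acceptance criteria, decided before running the prompt.
DONE: dict[str, Check] = {
    "addresses the reader in second person": lambda text: " you " in f" {text.lower()} ",
    "stays under 150 words": lambda text: len(text.split()) <= 150,
    "ends with a complete sentence": lambda text: text.rstrip().endswith((".", "!", "?")),
}


def failed_checks(output: str, checks: dict[str, Check]) -> list[str]:
    """Return the names of the criteria the output does not meet."""
    return [name for name, check in checks.items() if not check(output)]


draft = "Prompting is easy. Anyone can do it."
revised = "You already debug code. Do the same with prompts."

print(failed_checks(draft, DONE))
print(failed_checks(revised, DONE))
```

Each failed check names something specific the model did not pick up from the prompt, which is a far more useful signal than "this doesn't feel right".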

Prompting is a skill, and as with most skills, the people who improve fastest are the ones who treat it as something to understand rather than something to perform. The model is a system with inputs and outputs. The inputs are mostly in your control. The debugging tools are the same ones you already have: hypothesis, experiment, observation, revision.

You already know how to do this. You do it every day.